The Guided Verification Problem.

Most "human-in-the-loop" UX is rubber-stamping. The pattern that actually works looks different — and it's the whole game for AI products.

I built three versions of the same expense tracker. The first one looked great and was silently wrong. The second one was correct and unusable. The third one is the version I now think every AI product team should be building.

This is what each version got wrong, what the third version got right, and why I think this pattern matters more than the model you pick.

Full Automation. Silently Wrong.

The first version was the obvious AI play. Plaid1 pulls every transaction from every account. The model categorizes each one — rental property, business, personal, charity. The user gets a clean report at the end of the month.

It looked great. It was wrong about the things that mattered.

A $2,400 plumbing repair on a rental property, miscategorized as "office supplies."2 A $180 dinner with a prospective Pilates client, dropped into "personal." A reimbursement for a deposit, double-counted because the model didn't recognize the reverse pair.

None of these errors fired an alert. There was nothing to alert on — the model returned a confident category for every transaction. The output was a beautiful, perfectly-formatted report containing dozens of expensive mistakes, and you only found them at tax time.

The deeper problem isn't the accuracy rate. It's that the user has no surface to engage with the model's reasoning. If the only way to catch errors is to re-do the categorization yourself, the AI hasn't actually saved you any work. You've just shifted from doing the task to auditing it — which is harder.

Fully Manual. Soul-Crushing.

So I flipped it. Version 2 imported transactions and put them in a queue. The user sat at a table and clicked through every row. No AI. Just a fast-keyboard-driven categorization tool.

Accuracy: perfect. Adoption: zero.

Nobody — including me, the person who built it — wanted to grind through 200 transactions on the first of every month. The act of categorizing the tenth coffee shop transaction in a row is the kind of work AI is supposed to obviate, not formalize.

The lesson here was less obvious than the V1 lesson. The model wasn't useless — the V1 model had been right roughly 80% of the time.3 Throwing all of it away to recover trust was the wrong trade. What I needed was a way to keep the speed of automation and the trust of manual review.

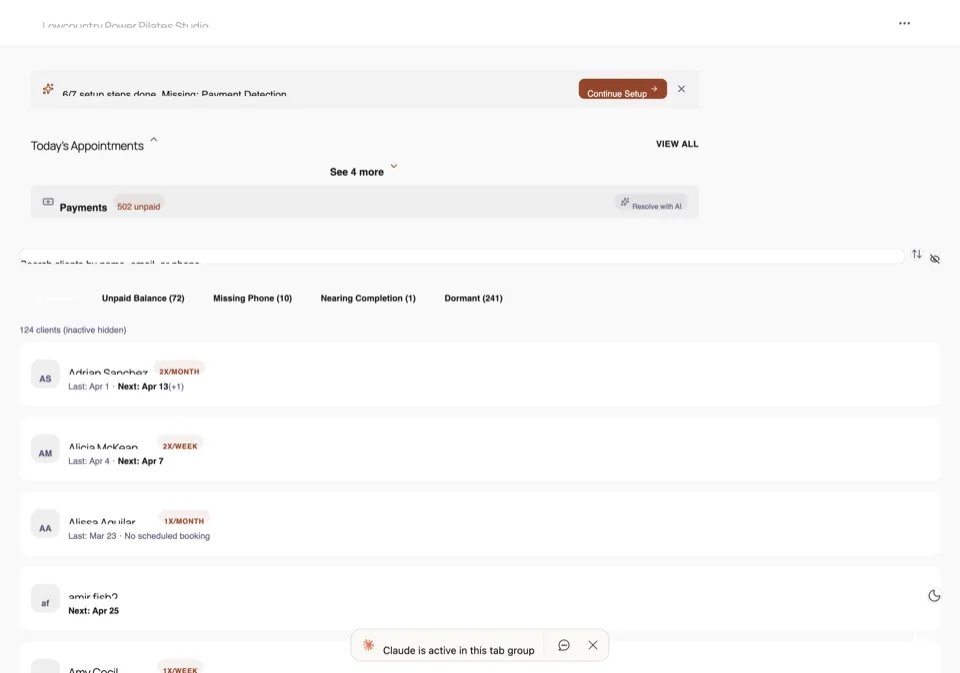

Guided Verification.4

Version 3 is the one that works. The system does a first-pass categorization, then walks the user through the result one decision at a time — but it owns the walk. It's not a list with checkboxes. It's a flow.

Each transaction comes up on a card showing four things:

- What it did — the category it picked.

- Why — one sentence of reasoning, in plain English. "Marked rental because the merchant matches a vendor you've previously paid from this property's account."

- How confident — a flag if the signal was weak or contradictory.

- The choice — confirm, change, or skip. One keystroke each.

The flow is ordered: hard-first5 — high-uncertainty cards first, easy ones last. Often by the time the user gets through the uncertain ones, the system can confirm-by-default the rest. 100 transactions verified in under 10 minutes. Down from two hours, with the same accuracy as V2.

That sentence is the whole pattern. In V1, the AI did the work and the user was supposed to audit it. In V2, the user did the work and the AI was absent. In V3, the AI does the work and the audit, and the user supplies one bit of judgment per uncertain decision. Their job is to react, not to scan.

"Human In The Loop" Is Doing A Lot Of Work In That Sentence.

Every AI product team I talk to says they have a human in the loop6. Almost none of them mean what V3 means. Most mean V1: the model produces output, the human sees it, the human is theoretically responsible for catching errors. That's not a loop. That's a dump.

A real loop has three properties:

- The system surfaces its own uncertainty. Not just confidence scores — readable explanations of why a particular call is hard. "Two vendors with similar names. Last time you said personal."

- The system orders the review for the user. Hardest first. Cheap signals last. Don't make the user prioritize.

- The system collapses to single-bit decisions. If a card asks the user to think for more than a few seconds, the design is wrong. Either pre-resolve more, or break the decision into parts.

I've started using "guided verification" as the test. If you can't describe how your product does these three things, you don't have a human in the loop. You have a human at the dump.

The Pattern Generalizes.

Once you see the shape, it's everywhere. Code review of AI-generated patches. Reviewing AI-drafted emails. Triaging support tickets. Tagging photos. Every task that looks like "AI does the bulk, human catches the edges" has the same V1 / V2 / V3 trajectory available to it, and almost everyone is still on V1.

The teams I find myself most interested in are the ones building V3 from the start — designing the verification flow before they ship the categorizer. That's a different kind of product spec than most AI-native teams write today, and it's where I think the next few years of differentiation will come from.

If you're building something in this shape and want to compare notes, my email's at the bottom.